Neural Networks

How Computers Actually "Think": A Guide to Neural Networks

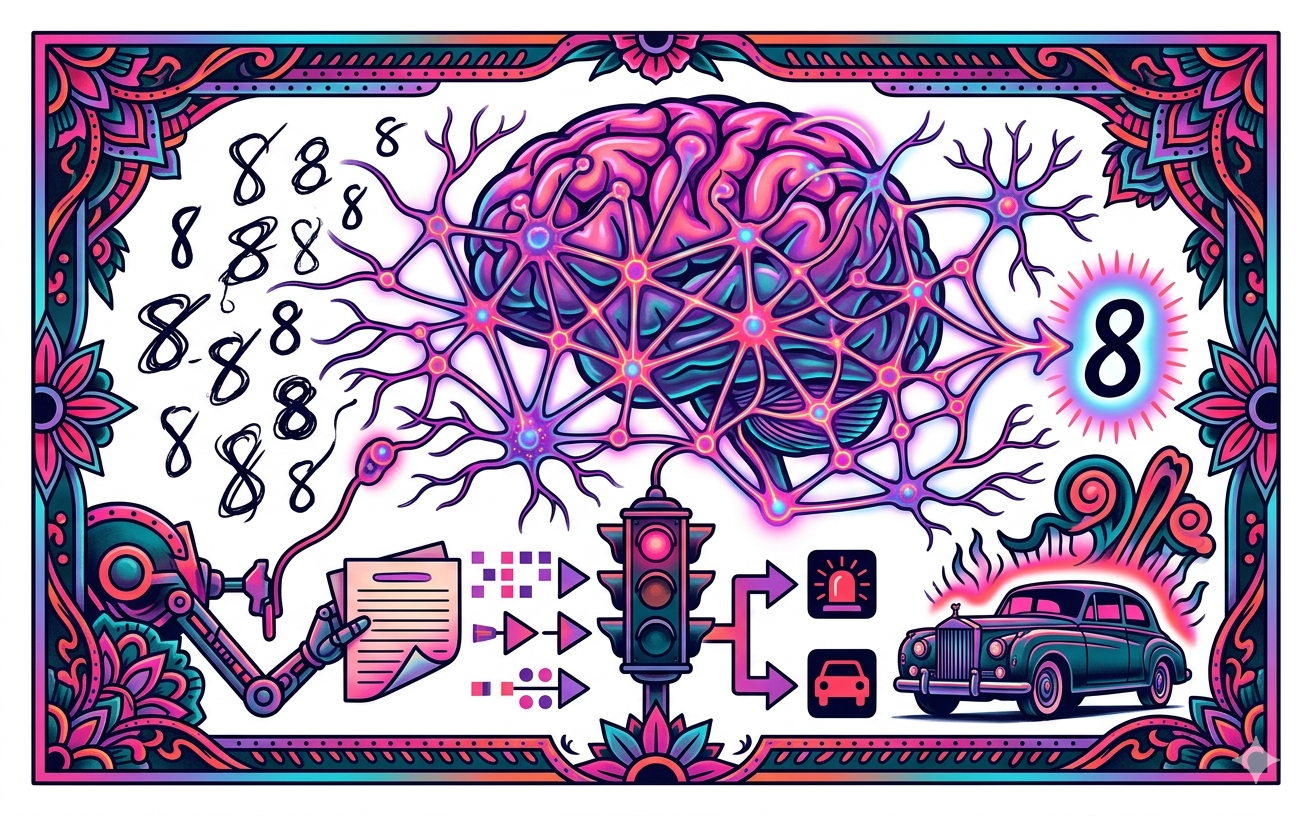

If you want to understand how a phone recognizes your face or how a car drives itself, you have to stop thinking about computers as machines that follow a list of rules. Traditional coding is like a recipe: "if the light is red, stop."

The real world is too messy for these recipes. You can't write a rule for every possible way a human can scrawl the number "8." Instead, we use Neural Networks. These are math models inspired by the human brain that let computers learn from patterns instead of instructions.

The whole thing starts with a single "unit" or an artificial neuron.

Think of this as a tiny calculator. It takes in some numbers as inputs, but it doesn't treat them all equally. It multiplies each input by a "weight." If a piece of data is really important, it gets a heavy weight. If it’s junk, it gets a light weight. The neuron adds those all up and throws in a "bias," which is just an extra number to nudge the result. Finally, it passes the total through an "activation function." This is like a gatekeeper. It decides if the signal is strong enough to pass on to the next part of the network. If the math hits a certain threshold, the neuron "fires."
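Here is a rough sketch of that tiny calculator in Python. The numbers are made up for illustration, and the sigmoid function is just one common choice of activation (an assumption here, not the only option). Instead of a hard "fire or don't fire" threshold, sigmoid gives a smooth value between 0 and 1.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Multiply each input by its weight, add them up, add the bias,
    then pass the total through the activation function."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(total)

# Hypothetical values, chosen only to show the mechanics.
inputs = [0.5, 0.9, 0.1]
weights = [2.0, -1.0, 0.0]   # a heavy weight, a negative weight, an ignored input
bias = 0.5

output = neuron(inputs, weights, bias)
print(output)  # a number between 0 and 1; closer to 1 means a stronger signal
```

Notice that the third input has a weight of 0.0, so it has no effect on the result no matter what value it takes.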

One neuron is pretty dumb, so we stack them in layers.

You have the Input Layer where the data enters and the Output Layer where the answer pops out. In between, you have Hidden Layers. This is where the magic happens. When you have tons of hidden layers, we call it Deep Learning. In these layers, the computer isn't just looking at pixels or numbers; it’s finding features. One layer might find straight lines, the next finds curves, and the next recognizes that those curves look like a human eye.
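To make the stacking concrete, here is a minimal sketch of a network with one hidden layer. The layer sizes and every weight below are hypothetical. A real network would learn its weights rather than have them typed in by hand.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One layer of neurons. Each row of `weights` belongs to one neuron,
    and every neuron sees all of the layer's inputs."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

# Hypothetical network: 2 inputs -> 3 hidden neurons -> 1 output neuron.
hidden_weights = [[0.2, -0.4], [0.7, 0.1], [-0.5, 0.6]]
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.3, -0.2, 0.8]]
output_biases = [0.05]

def forward(inputs):
    """Push data from the input layer, through the hidden layer, to the output."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)

prediction = forward([1.0, 0.5])
print(prediction)  # a list holding the single output neuron's value
```

The output of one layer is simply the input of the next, so adding more hidden layers means calling `dense_layer` more times in a row.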

But how does it actually learn?

The network starts out with random weights, guessing like a toddler. When it makes a mistake, a "Loss Function" calculates exactly how wrong the guess was. Then comes "Gradient Descent." Imagine being on a foggy mountain and needing to find the valley. You can't see the bottom, but you can feel the slope under your feet. You take a small step in the steepest downward direction, then feel the slope again and repeat. The computer does this with math: the "slope" is how much the loss changes when a weight changes. It uses Backpropagation to send the error backward through the network, telling every single neuron how to tweak its weights just a little bit so the next guess is better.
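The foggy-mountain walk can be sketched on the smallest possible model: one neuron with one weight, one bias, and no activation function. The data, the learning rate, and the number of steps are all assumptions made for this example. With only one neuron, backpropagation shrinks down to a single application of the chain rule; in a deeper network the same rule is applied layer by layer, from the output backward.

```python
# Toy data that follows y = 2x + 1 (made up for this example).
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

# Real networks start from random values; zeros keep this sketch repeatable.
w, b = 0.0, 0.0
learning_rate = 0.05  # how big each downhill step is

def loss(w, b):
    """Mean squared error: the average of (guess - truth) squared."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

initial_loss = loss(w, b)

for step in range(2000):
    grad_w = 0.0
    grad_b = 0.0
    for x, y in data:
        error = (w * x + b) - y                # how wrong this guess was
        grad_w += 2 * error * x / len(data)    # slope of the loss with respect to w
        grad_b += 2 * error / len(data)        # slope of the loss with respect to b
    # Step downhill: move each parameter against its slope.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

final_loss = loss(w, b)
print(w, b, final_loss)  # w and b should end up close to 2 and 1
```

If the learning rate is too large, the steps overshoot the valley and the loss can grow instead of shrink. If it is too small, training just takes longer.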

A big danger here is Overfitting. This happens when the computer is too smart for its own good. It memorizes the specific training data instead of learning the general pattern. It’s like a student who memorizes the exact answers to a practice test but fails the real exam because the questions changed slightly. One common way to fight this is "Dropout." During training, we randomly tell some neurons to take a nap. This forces the remaining neurons to work harder and learn the actual patterns rather than relying on a few "genius" neurons that just memorized the answers.
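Here is a minimal sketch of the napping trick. The function name and the numbers are hypothetical. One detail goes beyond the description above: the surviving values are scaled up so the layer's average output stays about the same. This variant is usually called "inverted dropout," and it is an assumption of this sketch rather than the only way to do it.

```python
import random

def dropout(activations, drop_prob, rng, training=True):
    """Randomly silence neurons during training.

    Each activation is zeroed with probability `drop_prob`. Survivors are
    divided by the keep probability so the expected total is unchanged.
    Outside of training, nothing is dropped.
    """
    if not training or drop_prob == 0.0:
        return list(activations)
    keep_prob = 1.0 - drop_prob
    return [
        a / keep_prob if rng.random() < keep_prob else 0.0
        for a in activations
    ]

rng = random.Random(0)  # fixed seed so the sketch is repeatable
hidden = [0.8, 0.1, 0.5, 0.9, 0.3, 0.7]  # made-up hidden-layer outputs

dropped = dropout(hidden, drop_prob=0.5, rng=rng)
unchanged = dropout(hidden, drop_prob=0.5, rng=rng, training=False)

print(dropped)    # some entries are 0.0, the rest are doubled
print(unchanged)  # identical to `hidden`
```

The naps only happen during training. When the network is making real predictions, every neuron is awake.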

If the task is seeing images, we use a Convolutional Neural Network or CNN. Images are huge, and a regular network would get overwhelmed by all those pixels. A CNN uses "Convolution" to slide a small filter over the image to find edges or shapes. Then it uses "Pooling" to shrink the result. Max-pooling looks at a small square of values and only keeps the largest one, tossing the rest. This makes the data smaller and helps the computer stay focused on the important parts even if the object in the photo shifts a few pixels to the left.
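Both steps fit in a few lines of plain Python. This is a toy sketch: the 5x5 "image" and the edge-finding filter are made up, and real CNNs learn their filter values during training instead of using hand-written ones.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1). At each position,
    multiply overlapping values and sum them. Like most deep learning code,
    this does not flip the kernel, so strictly it is cross-correlation."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def max_pool(feature_map, size=2):
    """Keep only the largest value in each non-overlapping size x size block."""
    return [
        [
            max(feature_map[r + i][c + j]
                for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]

# A tiny image: dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]

# Hypothetical filter that responds where brightness jumps from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

edges = convolve2d(image, kernel)   # 4x4 map, large only at the dark-to-bright edge
pooled = max_pool(edges)            # 2x2 summary of that map

print(edges)   # [[0, 2, 0, 0], [0, 2, 0, 0], [0, 2, 0, 0], [0, 2, 0, 0]]
print(pooled)  # [[2, 0], [2, 0]]
```

The pooled output still says "there is a vertical edge on the left half" while using a quarter of the numbers.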

If the task involves sequences, we use a Recurrent Neural Network or RNN.

Traditional networks have no memory; they treat every input like it’s the first time they’ve ever seen the world. RNNs have loops that allow information to persist. This is vital for sequences like sentences or videos. In a sentence, the fifth word can depend on the first word. An RNN feeds its own output from one step back into itself at the next step, creating a sort of short-term memory. This is the idea behind your phone predicting the next word you’re going to text, or a translation tool reading a sentence in context instead of just translating one word at a time.
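The loop can be sketched with a single recurrent neuron. The weights here are hypothetical, and tanh is assumed as the activation because it is a common choice for simple RNNs. The key line is the one where the previous state `h` is fed back in alongside the new input.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One time step: mix the new input with the memory of earlier steps."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence in one item at a time, carrying the state forward."""
    h = 0.0  # empty memory before the first input
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)  # the loop: old state goes back in
        states.append(h)
    return states

# Two sequences that differ only in their first item.
states_a = run_rnn([1.0, 0.0, 0.0])
states_b = run_rnn([0.0, 0.0, 0.0])

print(states_a)  # the first input still echoes in the later states
print(states_b)  # all zeros: nothing to remember
```

The last two inputs are identical in both sequences, yet the final states differ. That lingering trace of the first input is the short-term memory, and it fades a little with every step.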

Neural networks basically turned computers from calculators into fast learners. By mimicking the way our own neurons fire and adjust, we’ve created machines that can see, hear, and speak. It’s all just layers of weighted math, but when you stack enough of it together, it starts to look a lot like intelligence.