Why AI Confidently Lies to You

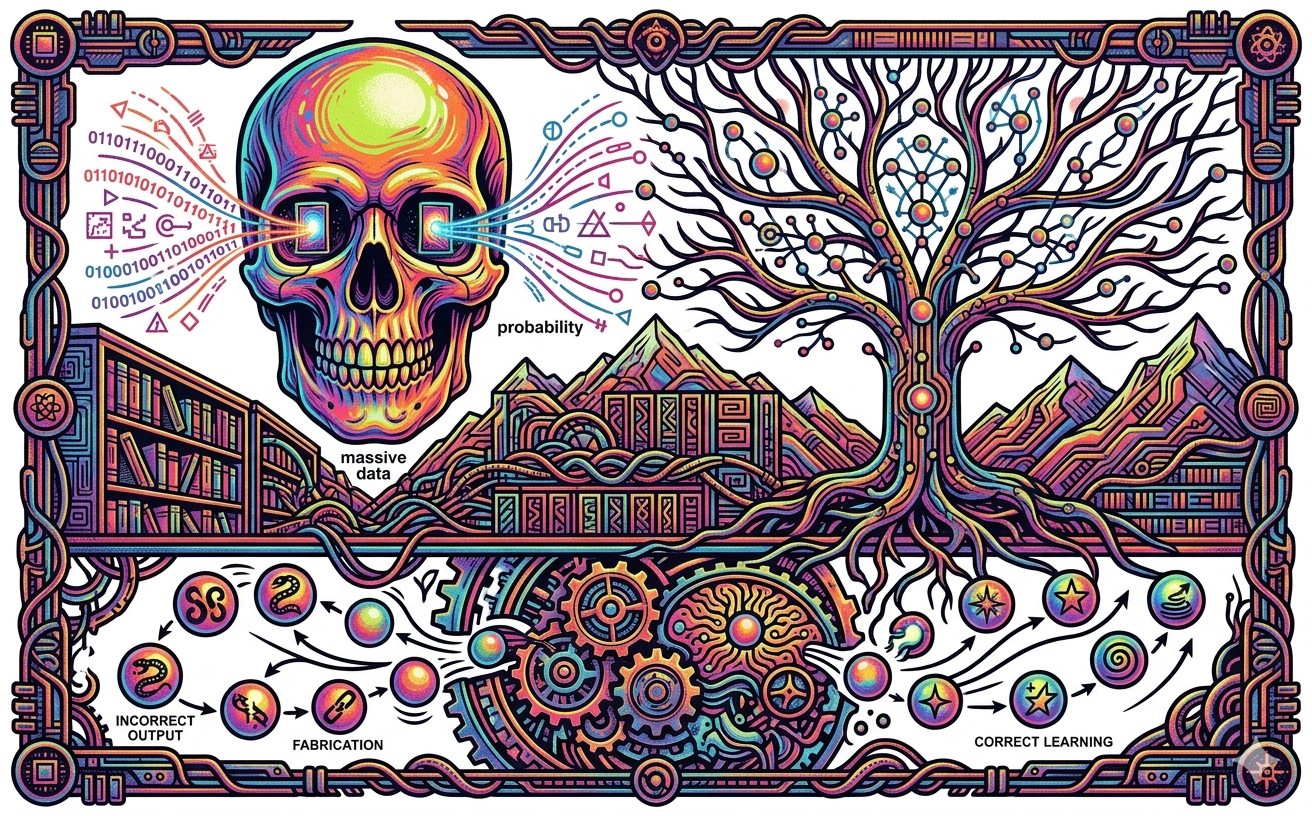

How AI Actually Works (And Why It Sometimes Confidently Lies to You)

If you’ve used ChatGPT, Gemini or Claude, you already know that AI feels like magic. It can write essays, generate hyper-realistic photos, and even write computer code in seconds.

But what is actually going on under the hood? Is there a tiny digital brain thinking inside your phone?

Not exactly. The truth is a mix of massive data, clever trial-and-error, and a whole lot of math. Here is the breakdown of how we went from simple computer games to AI that can hold a conversation.

The Old Way: Playing by the Rules

Years ago, if you wanted to build an AI to play a game like Tic-Tac-Toe, you didn't need it to "learn." You just needed to give it a massive list of rules. Programmers used simple "if/then" logic and decision trees. The code basically said, "If the human plays in the corner, then play in the center."

Using an algorithm called Minimax, the computer would look ahead through every possible sequence of moves, score how each game ends, and pick the move that maximizes its own score while assuming you will always pick the move that minimizes it. It’s why you can never beat a perfect computer at Tic-Tac-Toe; the best you can do is force a draw.
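Here is a minimal sketch of that idea in Python, not taken from any particular game engine. The board is assumed to be a 9-character string of "X", "O" and spaces, and the names `winner`, `minimax` and `best_move` are just illustrative choices.

```python
from functools import lru_cache

# The eight winning lines on a 3x3 board whose cells are indexed 0..8.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if someone has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def minimax(board, player):
    """Score a position from X's point of view: +1 X wins, -1 O wins, 0 draw."""
    won = winner(board)
    if won == "X":
        return 1
    if won == "O":
        return -1
    if " " not in board:
        return 0  # board is full and nobody won
    other = "O" if player == "X" else "X"
    # Try every empty cell and score the position that results.
    scores = [minimax(board[:i] + player + board[i + 1:], other)
              for i, cell in enumerate(board) if cell == " "]
    # X picks the highest score, O picks the lowest.
    return max(scores) if player == "X" else min(scores)


def best_move(board, player):
    """Return the index of the empty cell that leads to the best score."""
    other = "O" if player == "X" else "X"
    moves = [i for i, cell in enumerate(board) if cell == " "]
    pick = max if player == "X" else min
    return pick(moves, key=lambda i: minimax(board[:i] + player + board[i + 1:], other))


# With perfect play on both sides, an empty board is a draw (score 0).
empty_board_score = minimax(" " * 9, "X")
print(empty_board_score)
```

The cache matters here: many different move orders lead to the same board, so remembering scores keeps the search fast even though it covers the whole game.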

But there’s a huge problem with this: it only works for simple things. Tic-Tac-Toe has a few hundred thousand possible games. Chess has roughly 85 billion possible ways to play just the first four moves by each side. A game like Go has around 10^170 legal board positions, far more than the number of atoms in the observable universe. Writing "if/then" rules for every single scenario is practically impossible.

The Game Changer: Machine Learning

Because humans couldn't write enough rules, we had to teach computers to figure out the rules themselves. This is called Machine Learning.

One of the coolest ways machines learn is through Reinforcement Learning. Imagine you are trying to teach a robot arm to flip a pancake, or an AI to navigate a maze in a video game. Instead of telling the AI exactly how to move, you just give it a goal. When the AI gets closer to the goal, you reward it with points (like giving a dog a treat). When it messes up, you penalize it.

At first, the AI just flails around doing random stuff. But over thousands of tries, it notices, "Hey, every time I move the pan this way, my score goes up!" Over time, it perfects the action. To keep the AI from getting stuck doing the same basic moves over and over, programmers inject a little bit of randomness. A common setup is that about 10% of the time, the AI will try something totally new (exploring) rather than sticking to what it knows works (exploiting). This is how AI can discover insanely clever strategies that humans never even thought of.
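The explore-versus-exploit trick is often called "epsilon-greedy." Below is a rough sketch of it on a toy problem: three possible actions, each with a hidden average reward. The reward values, the noise level, and the function name `epsilon_greedy_bandit` are all made up for illustration.

```python
import random


def epsilon_greedy_bandit(true_rewards, steps=5000, epsilon=0.1, seed=0):
    """Learn which action pays best by trial and error.

    true_rewards holds the hidden average reward of each action; the
    learner never sees it directly, only noisy samples of it.
    """
    rng = random.Random(seed)
    n = len(true_rewards)
    counts = [0] * n        # how many times each action was tried
    estimates = [0.0] * n   # running average reward seen for each action
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n)  # explore: try a random action
        else:
            action = max(range(n), key=lambda a: estimates[a])  # exploit
        reward = true_rewards[action] + rng.gauss(0, 0.5)  # noisy feedback
        counts[action] += 1
        # Nudge the running average toward the reward we just observed.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


estimates, counts = epsilon_greedy_bandit([0.2, 0.5, 1.5])
best = max(range(len(estimates)), key=lambda a: estimates[a])
print("best action:", best, "tries per action:", counts)
```

Setting `epsilon=0.1` matches the "about 10% of the time" figure. Without that random exploration, the learner could lock onto the first action that ever paid off and never find the better one.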

Brain Math: Neural Networks

For AI to handle really messy real-world stuff—like recognizing handwriting, catching spam emails, or recommending your next Netflix show—it uses Deep Learning and Neural Networks.

A neural network is a piece of software inspired by the human brain. You have "inputs" on one side (like the pixels of an image) and an "output" on the other side (the AI predicting "this is a picture of a cat"). In between those are layers and layers of hidden math equations.

You train it by feeding it millions of examples. At first, the math is totally randomized. But as it processes millions of photos, the AI automatically tweaks its own math parameters (billions of them in the largest models) until it starts consistently predicting the right answers. In many modern setups, we don't even have to hand-label all of the data anymore; the network learns much of the structure from raw data on its own and gets incredibly good at finding invisible patterns.
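To make "tweaking its own math" concrete, here is a deliberately tiny sketch: a single artificial neuron (the smallest building block of a network) learning the logical OR function from four labeled examples. Real networks stack many layers of these, and the learning rate, epoch count, and names like `train_neuron` are assumptions chosen for this toy.

```python
import math
import random


def sigmoid(z):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """The neuron's output: a weighted sum of inputs passed through sigmoid."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_neuron(examples, epochs=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    # Start with random parameters, so early predictions are junk.
    weights = [rng.uniform(-1, 1) for _ in range(len(examples[0][0]))]
    bias = rng.uniform(-1, 1)
    for _ in range(epochs):
        for inputs, target in examples:
            error = predict(weights, bias, inputs) - target
            # Gradient descent step: move each parameter a little in the
            # direction that would have made this prediction less wrong.
            weights = [w - lr * error * x for w, x in zip(weights, inputs)]
            bias -= lr * error
    return weights, bias


# Inputs and the correct answer for logical OR.
OR_EXAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

weights, bias = train_neuron(OR_EXAMPLES)
for inputs, target in OR_EXAMPLES:
    print(inputs, "->", round(predict(weights, bias, inputs), 3), "expected", target)
```

Nobody wrote a rule saying "output 1 if either input is 1." The neuron ended up there purely by having its numbers nudged after each mistake.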

ChatGPT Is Just a Giant Autocomplete

So, how does this lead to ChatGPT? Modern AI chatbots use something called a Large Language Model (LLM).

To an LLM, words aren't actually words; they are giant lists of numbers. The AI breaks down entire sentences into these numbers and uses something called "attention" to map out how words relate to each other in a sentence. For example, it tracks the invisible connection between the word "Massachusetts" at the beginning of a paragraph and the word "capital" at the very end.
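As a rough illustration of "attention," the sketch below scores how strongly one word should look at every other word, using the standard scaled dot-product formula. The two-number word vectors are invented for this example, and a real model would first pass them through learned transformations, which this sketch skips.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that add up to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Similarity between the query word and every word in the sentence.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Blend the value vectors according to those weights.
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


# Made-up vectors: "Massachusetts" and "capital" point in similar directions.
words = ["Massachusetts", "is", "capital"]
vectors = [[1.0, 0.2], [0.0, 0.1], [0.9, 0.4]]

# How much attention does "capital" pay to each word?
weights, _ = attention(vectors[2], vectors, vectors)
for word, weight in zip(words, weights):
    print(f"{word}: {weight:.2f}")
```

With these numbers, "capital" puts more weight on "Massachusetts" than on "is," which is the kind of long-distance link the paragraph above describes.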

When you ask ChatGPT or Claude or Gemini a question, the underlying model isn't "thinking" about your question or looking up the answer the way a person would. It is acting like a super-powered version of the autocomplete on your phone. Because it has been trained on a huge slice of the internet (Reddit posts, Wikipedia, books, articles), its neural network estimates the probability of every possible next word (technically, word fragments called tokens) and picks a likely one, over and over, one piece at a time.
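A very rough way to see next-word prediction in action is a "bigram" model, which just counts which word tends to follow which. This is far simpler than what a real LLM does (no neural network, no attention), and the tiny corpus and function names are made up for the sketch.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words followed it in the text."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table


def next_word_probs(table, word):
    """Turn the follow-up counts for a word into probabilities."""
    counts = table.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def autocomplete(table, start, length=5):
    """Repeatedly append the most likely next word."""
    words = [start]
    for _ in range(length):
        options = table.get(words[-1])
        if not options:
            break  # this word was never followed by anything in training
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
table = train_bigrams(corpus)

print(next_word_probs(table, "the"))
print(autocomplete(table, "the", length=3))
```

The model has no idea what a cat is. It only knows that, in its training text, "cat" followed "the" more often than anything else did.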

The Catch: Hallucinations

Because AI is essentially just a giant probability machine guessing the next word, it has a major flaw: it can confidently lie to your face.

In the tech world, this is called a hallucination. If the AI is guessing the next word and nothing in its training clearly points to the right answer, or it was trained on bad internet data, it will still produce whatever continuation looks most plausible, even if that means making something up. And because it's designed to sound natural and confident, it will sound totally convincing when it tells you something completely wrong.
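A toy example shows how bad data turns into a confident wrong answer. The tallies below are entirely hypothetical: imagine a training set where sloppy web pages said "Sydney" more often than the correct "Canberra" after the phrase "the capital of Australia is." The helper name `most_likely` is also just illustrative.

```python
def most_likely(counts):
    """Return the most frequent continuation and its share of the total."""
    total = sum(counts.values())
    word = max(counts, key=counts.get)
    return word, counts[word] / total


# Hypothetical counts of what followed "the capital of Australia is"
# in an imaginary, error-filled training set.
continuations = {"Sydney": 7, "Canberra": 3}

answer, confidence = most_likely(continuations)
print(f"The capital of Australia is {answer} ({confidence:.0%} likely)")
```

Nothing in this process checks whether the answer is true. The most common continuation wins, so the wrong answer comes out sounding just as sure of itself as a right one would.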

This is why, even though tools like GitHub Copilot or Claude Code can write amazing code for you in seconds, you still have to know how to code yourself. AI is the ultimate cheat code for skipping tedious, boring work, but it still needs a human to oversee it, spot its weird mistakes, and point it in the right direction.